Click Work

When we say "data" we mean "humans."

This week's course topic was chatbots, or put another way, any kind of program that takes words as input and gives you words as output: answers to questions, conversation, draft emails. I didn't get very far in the first assigned reading for the week, chapter 7 "Question Answering" of Natural Language Processing With Transfomers by Tunstall et al., without getting distracted by something really human.

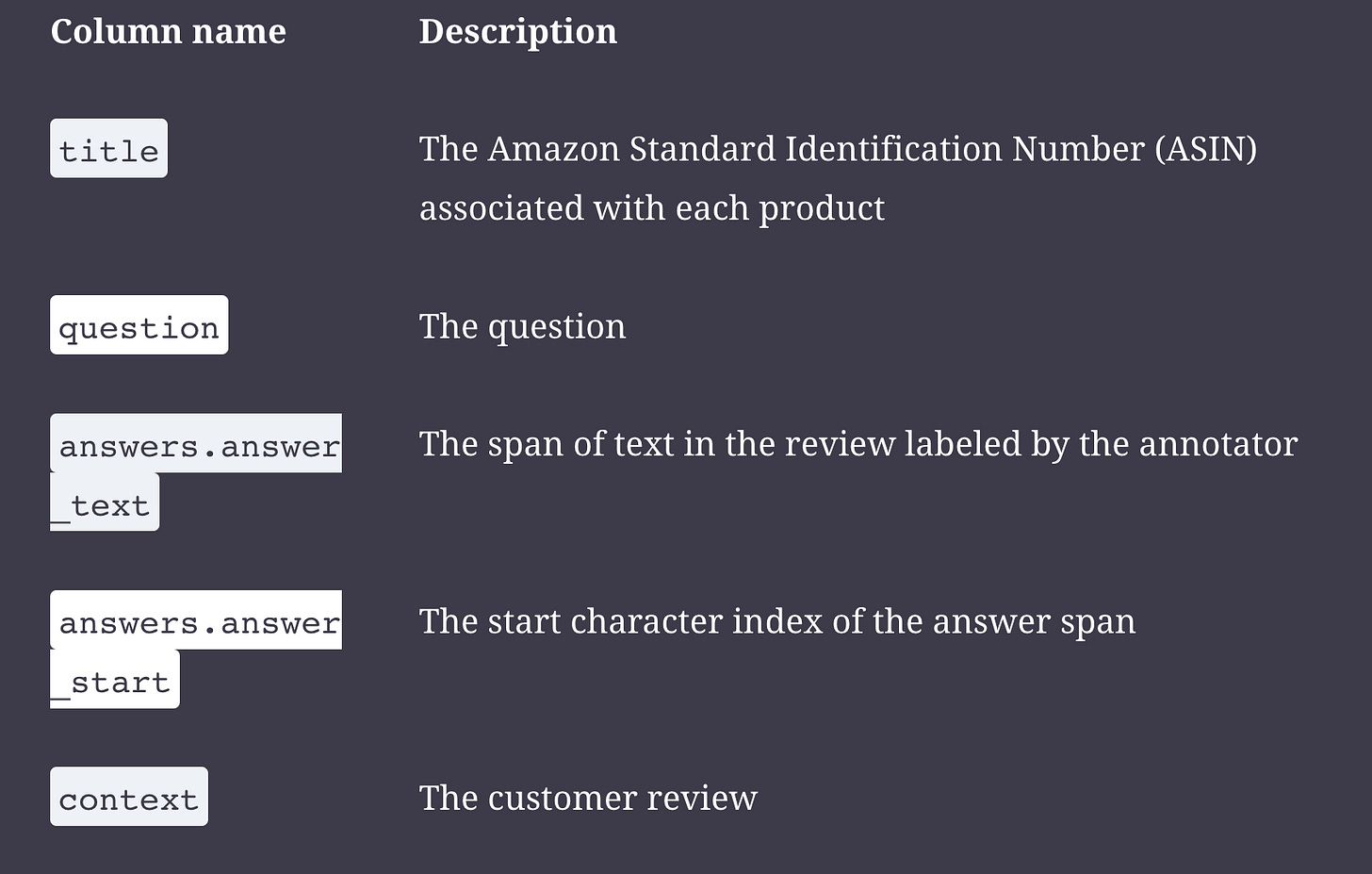

It started with the chapter's look into a data record in a question-answer (QA) dataset:

My eye first snagged on the use of Amazon Standard Identification number as a unique identifier, with an obligatory sigh of resignation that of course that was going to be the default source of truth for consumer goods. Still, for a dataset that hopes to be long-lived, this is placing a lot of faith in the idea that Amazon is going to maintain this directory over time.

But I mainly thought about the field of "answer_text" being defined as "span of text in the review labeled by the annotator." This is followed by the field "answer_start" which is "the start character index of the answer span"--the index, here, means the number of its position in the whole sequence of characters, which fun fact are almost always counted starting from zero.1 Both of these fields together conjured up an image in my mind of someone's cursor, clicking and dragging a bright color across a certain span of text, and underneath the interface, the program translating that cursor placement to an index position. There might have been multiple annotators, they might have then taken a mode of the start positions selected. But there's no dataset without hands all over it.

This was underscored by the authorial aside following our exploratory look at the dataset:

"Notice that the dataset is relatively small, with only 1,908 examples in total. This simulates a real-world scenario, since getting domain experts to label extractive QA datasets is labor-intensive and expensive. For example, the CUAD dataset for extractive QA on legal contracts is estimated to have a value of $2 million to account for the legal expertise needed to annotate its 13,000 examples!"2

I was reminded, not for the first time, of the work of someone who was a grad student in information at my doctoral institution while I was there too, Melissa Chalmers, who wrote her dissertation on labor and the infrastructure of digitization. I remember going to a presentation she gave, and that one of her slides was a screenshot of a page from Google books that has a human hand showing on it, not moved out of the way fast enough and caught by the relentless scanner.

The foundational datasets for QA are all human-annotated. But when you are a human data annotator, there's a specific word for you, which is "crowdworker." This is standard terminology for describing dataset creation. The "Stanford Question Answering Dataset (SQuAD) is a reading comprehension dataset, consisting of questions posed by crowdworkers on a set of Wikipedia articles." The SubjQA dataset we were examining in the chapter was created by "crowdworkers who highlight an answer span in the review."

We could have just saved four characters and called them "workers," eh? They are people doing a thing for someone else for money. They are doing a job--a pretty important job, if you believe investment capital. Why do we need to say they are "crowdworkers"? Is it to make it clear that you have no idea who they were and no way to find out? Is it to justify that they were probably paid actual pennies? Is it to suggest that it's okay to pay them pennies because it's "just clicking"? The SQuAD workers were doing more than that, though. They were coming up with questions that seemed both answerable and unanswerable for every text passage. Is it a way to say that they have no expertise whatsover? Clearly not, because they at least know what constitutes an answer to a question. It seems like "crowdworker" is doing a lot of work, here. It's trying to say there's this whole other category of work that is so vital we have to find a way to do it but also so menial we don't want to recognize it as work. It's a fig leaf of some kind--a way to cover up either that you don't really know who did your work for you or that you think the quality of work done this way should be flagged, somehow, but not enough to keep you from publishing your dataset.

Reaping what I google, I have also been by bombarded by ads for a company promising flexible gig work annotating data to all comers. They promise $20/hour for flexible, plentiful unlimited work

contributing to AI innovation

paid seven days after task completion, via PayPal only.

Assuming the company were quasi-legit (probably a pretty big assumption), I wonder how draconian the quotas are to earn that twenty bucks. Another theory is that their "assessment" for prospective annotators is actually the work itself, so if they can get people to "apply," they can actually get their work done. Still, it feels like a shift in the portrayal of data annotation as work. Mechanical Turk has been around a long time, but it has never tried to to recruit me, nor has it ever tried to make me believe that most of the people working for it are basic middle aged white people who like to travel.

For any purpose related to improving labor conditions, I think we have to recognize data annotation as skilled work. It still kind of gives me the creeps to think about this as a growth employment field. There’s something very snake eating it’s tail about having glossy websites recruiting people to click on things that other people, or more likely right now machines, created, so that other, already much richer people can make money selling machines finely tuned to create more similar things. The answer to the question about "how much work will I have available" is darkly hilarious: unlimited, until we've labeled enough that no original content will ever again be required, because we'll be able to generate it all.

Oooph. Good thing I'm going to a concert tonight.

Why is there no answer end index position? Because you can always derive what it should by getting the length of the answer itself. So they saved themselves some character spaces in memory to use to make the word “crowdworker.”

Here’s what that paper says about their data labeling: “LabelingProcess. We had contracts labeled by law students and quality-checked by experienced lawyers. These law students first went through 70-100 hours of training for labeling that was designed by experienced lawyers, so as to ensure that labels are of high quality. In the process, we also wrote extensive documentation on precisely how to identify each label category in a contract, which goes in to detail. This documentation takes up more than one hundred pages and ensures that labels are consistent.”